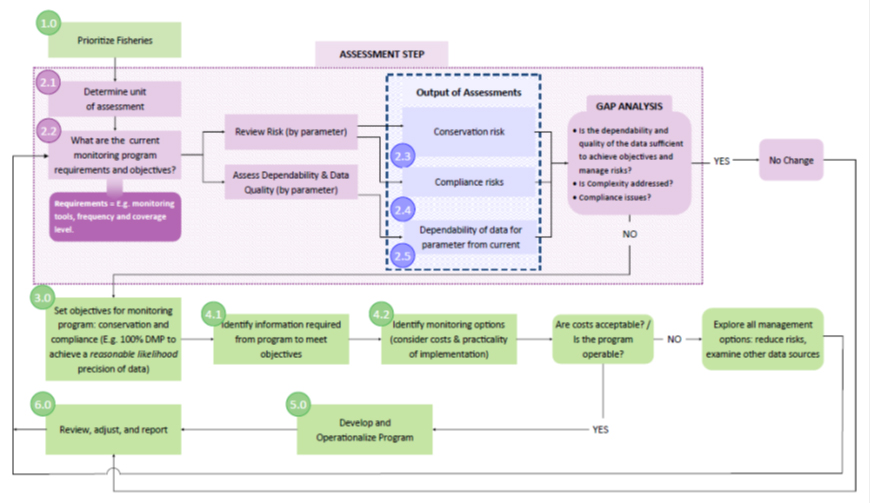

Six steps to implement the fishery monitoring policy

Purpose: to assess whether the monitoring program in a fishery and the data collected are sufficient to meet the objectives of the monitoring program. The assessment will consider a number of factors including the fishery risks, data needs and required data dependability. The assessment will identify any gaps in the monitoring program to be addressed.

Step 1: Prioritize fisheries for assessment (Fig. 1, s. 1.0):

Fisheries are selected based on factors such as the degree of conservation or compliance risk, treaty or international obligations, and market access requirements. Over time, the monitoring programs in all fisheries will be assessed.

Step 2: Assess the monitoring program:

Background / Context (Fig.1, s. 2.1) – Select the fishery unit for the assessment (e.g., the fishery, vessel, gear, area combination) and document background information such as the target and bycatch species, management regime, and other information.

Objectives and monitoring (Fig. 1, s. 2.2) – Capture fishery objectives and how they are being monitored. For example, a fishery objective might be to stay within the total allowable catch (TAC), with reporting and monitoring via logbooks, hail-in, and the Dockside Monitoring Program (DMP).

Risk screening (Fig. 1, s. 2.3/2.4) – Review the risk to target species, bycatch species, and habitat based on the consequence and likelihood of negative outcomes. Examine the occurrence of non-compliance. The screening highlights which risks need to be most closely monitored, informs whether current monitoring is adequate for the risk level, and indicates the required level of data dependability.
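As an illustration only, a consequence-and-likelihood screening could be sketched as below. The three-level scale, the multiplication of the two scores, the thresholds, and the names `Level` and `screen_risk` are all assumptions made for this example; the policy does not prescribe a scoring formula.

```python
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical three-point scale; not defined by the policy."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def screen_risk(consequence: Level, likelihood: Level) -> Level:
    """Combine consequence and likelihood into one risk level.

    Assumption: the two scores are multiplied (range 1 to 9) and
    bucketed with illustrative thresholds.
    """
    score = consequence * likelihood
    if score >= 6:
        return Level.HIGH
    if score >= 3:
        return Level.MEDIUM
    return Level.LOW


# Hypothetical (consequence, likelihood) inputs for one fishery unit.
components = {
    "target": (Level.MEDIUM, Level.LOW),
    "bycatch": (Level.HIGH, Level.MEDIUM),
    "habitat": (Level.LOW, Level.HIGH),
}

risk_by_component = {
    name: screen_risk(consequence, likelihood)
    for name, (consequence, likelihood) in components.items()
}

for name, level in risk_by_component.items():
    print(f"{name}: {level.name}")
```

In this sketch the bycatch component scores highest, so it would be the one to monitor most closely and the one needing the most dependable data.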

Analysis of data dependability and quality (Fig. 1, s. 2.5) – Assess the dependability and quality of data estimates either qualitatively or quantitatively. Monitoring that yields estimates with a low likelihood of approximating true values (e.g., estimated catch significantly underestimates actual catch) could be considered of “low dependability”, especially if the limit reference point is being approached.

Gap analysis – “Gaps” in monitoring programs, e.g., unreliable or missing data on key risk factors, emerge following the dependability and risk analyses. For example, if the risk of impact to bycatch species is “high”, yet the existing monitoring is focused exclusively on target species catches, this could indicate a monitoring gap. Reviewing dependability and risk together shows where data quality does not align with risk level. Fisheries with complex management regimes (e.g., multispecies fisheries, international fisheries, individual transferable quota (ITQ) fisheries) tend to carry higher risks and require greater data dependability. To address such gaps, monitoring must be robust, perhaps through a comprehensive mix of fisher-dependent and fisher-independent tools, and/or near-complete coverage of fishing activity.
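The comparison of risk against dependability can be sketched as follows. This is a minimal example under stated assumptions: the rule that required dependability equals the risk level, the three-level scale, and the name `find_gaps` are hypothetical and are not taken from the policy.

```python
# Hypothetical ordering of the qualitative levels.
ORDER = {"low": 1, "medium": 2, "high": 3}


def find_gaps(risk: dict, dependability: dict) -> list:
    """Return components whose data dependability falls short of their risk.

    Assumption: a component needs dependability at least equal to its
    risk level. A component with no dependability entry is treated as
    having no usable monitoring data.
    """
    gaps = []
    for component, risk_level in risk.items():
        actual = dependability.get(component)
        if actual is None or ORDER[actual] < ORDER[risk_level]:
            gaps.append((component, risk_level, actual or "missing"))
    return gaps


# Hypothetical inputs: monitoring covers target catch and habitat only.
risk = {"target": "medium", "bycatch": "high", "habitat": "low"}
dependability = {"target": "high", "habitat": "low"}

gaps = find_gaps(risk, dependability)
for component, needed, actual in gaps:
    print(f"gap: {component} (risk {needed}, dependability {actual})")
```

Here bycatch is flagged because its risk is high while no bycatch data are collected, which mirrors the example in the text above.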

Step 3: Set monitoring objectives (Fig. 1, s. 3.0):

Monitoring objectives establish goals for monitoring programs. They respond to dependability and risk concerns by guiding the selection of monitoring requirements (methods and coverage levels) that address the gaps identified in Step 2. For example, a monitoring objective could prescribe minimum coverage levels to achieve required data dependability for monitoring bycatch species. Another could require consistent reporting of fishing location to better address risk factors related to habitat. Compliance monitoring objectives are needed to ensure monitoring programs address concerns related to illegal activity.

Step 4: Specify monitoring requirements (Fig. 1, s. 4.0):

Tools and coverage (Fig. 1, s. 4.1) – Together with fish harvesters, managers need to document which data collection tools, and which coverage levels for each tool, will provide reliable data to achieve the monitoring objectives. This might be a combination of fisher-dependent tools (e.g., logbooks, hails) and fisher-independent tools (e.g., observers, video monitoring). Coverage might be partial (a survey approach) or complete (a census approach).

Considering costs (Fig. 1, s. 4.2) – Where changes are recommended, managers and fish harvesters are to seek ways to minimize cost. Some options for minimizing cost include:

- Using new cost-effective technologies, e.g., electronic logbooks or video monitoring,

- Redirecting monitoring effort from one fishery to another,

- Investigating alternative sources of data, or

- Taking a closer look at the risk itself to see how it might be mitigated.

Ultimately, monitoring must provide sufficient rigor to support fishery objectives. Where costs are deemed prohibitive, all options must be explored, including moving to a more conservative management approach, e.g., reducing the TAC.

Step 5: Operationalize the monitoring program (Fig. 1, s. 5.0):

Managers must commit to a series of clear, achievable Action Steps, specifying in regional workplans what actions must be taken to update the monitoring program. For example, “engage science on minimum data requirements for estimating catches of species X”.

Step 6: Review the monitoring program periodically and re-assess as required (Fig. 1, s. 6.0):

At the fishery level, through the post-season review process, DFO will evaluate whether the fishery’s revised monitoring program achieves its monitoring objectives. If required, the program must be adjusted, e.g., to address changes in the risk profile of the fishery. DFO will also track progress in implementing the Fisheries Monitoring Policy using the Sustainability Survey for Fisheries, which will use metrics to report which policy steps have been implemented. Finally, over the long term, DFO will evaluate whether the policy objectives have been achieved across fisheries at regional and national levels.

Fig. 1: Process for implementing the fisheries monitoring policy

Description

Figure 1 is a flow chart showing how the six policy implementation steps fit together. Step 1.0, prioritizing fisheries for assessment, leads into the assessment steps, steps 2.1 to 2.5. The assessment begins with identifying the fishery and monitoring objectives and describing the existing monitoring program. The assessment then screens the fishery against a set of pre-determined risk factors, and describes the dependability of the monitoring program in terms of its ability to help managers achieve the objectives. These two outputs – risk and dependability – are used to perform a gap analysis where needed improvements to monitoring are identified. The results of the gap analysis are used to develop new monitoring objectives and to set monitoring requirements.
